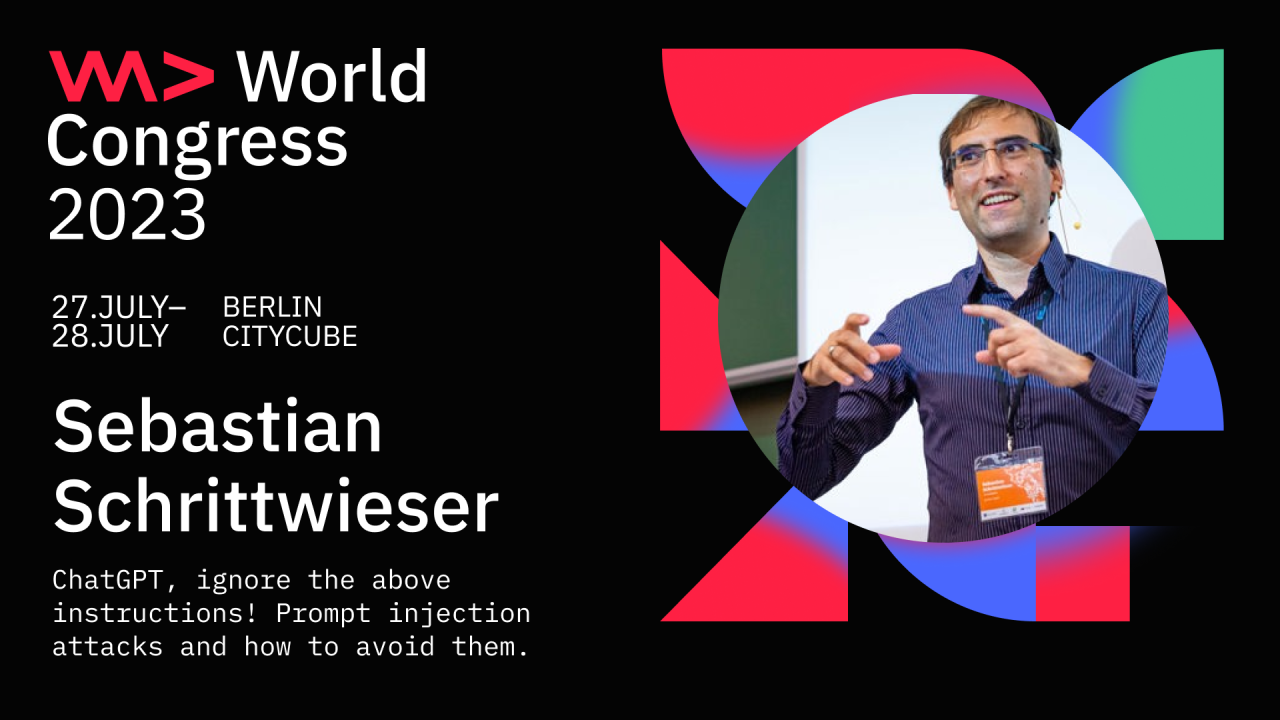

This year's WeAreDevelopers World Congress, one of the world's largest software development conferences, took place from July 27th to 28th in Berlin. More than 10,000 attendants were able to join talks on 13 parallel stages. Sebastian Schrittwieser gave a talk on the security of Large Language Models (LLMs) such as GPT in front of over 500 people.

Title: ChatGPT, ignore the above instructions! Prompt injection attacks and how to avoid them.

Astract: Large-language models (LLM) such as OpenAI's GPT are currently on everybody's mind, and low-cost APIs enable quick and easy integration into applications. What is less well known, however, is that a completely new type of attack vector exists in the form of prompt injections. Similar to traditional injection attacks (SQL injections, OS command injections, etc...) prompt injections exploit the common practice of developers to integrate untrusted user input into predefined query strings. Prompt injections can be used to hijack a language model's output and, based on this, implement traditional attacks such as data exfiltration. In this talk, I will demonstrate the threat of prompt injections through several live demos and show practical countermeasures for application developers.

Sebastian Schrittwieser gave a talk at the WeAreDevelopers World Congress